Safe & trusted Generative AI powered automation for regulated industries

Level up efficiency and user experience with the only conversational AI platform to safely harness the world's most advanced Generative AI & Large Language Models (LLMs)

Shorten time to value with digital assistants that are ready to go

OpenDialog provides out-of-the-box solutions for a wide range of conversational AI use cases in the healthcare and insurance sectors, designed to drive ROI from the get-go. We’ll integrate with your existing business systems and customize our digital assistants to your organization’s specific needs.

Break boundaries, not regulations

Leverage the world’s most advanced Generative AI safely in regulated sectors for a fluid, natural user experience that provides:

- Accurate, safe responses, free from hallucinations

- Transparent, explainable decisions

- Fine-grained controls over outputs

One platform to support your AI transformation journey

Far from simply automating repetitive tasks, OpenDialog is your strategic business asset, designed to support your digital transformation journey into the Generative AI age. With OpenDialog’s powerful data insights and our expert team behind you, you can automate up to 90% of interactions across your whole organization.

Conversational AI automation & customer intelligence software

Natural conversations, transformative outcomes

Safe & trusted AI

Built from the ground up for regulated industries, OpenDialog puts you in full control of your conversational applications, from the sources of knowledge to the AI models employed and the presentation of responses. Enjoy peace of mind with fully auditable and explainable data for every conversation and decision point. Rest assured, our platform never provides responses to questions it can’t understand.

Automate more

OpenDialog achieves higher levels of complex task completion without human intervention when compared to other conversational AI platforms thanks to its innovative context-first engine and multi-AI model capabilities. With the help of OpenDialog’s strategic data insights, we put you on the path to automate up to 90% of interactions across your whole business.

Faster & easier

Thanks to OpenDialog’s adaptable nature, there’s no need to plan for every possible permutation, which makes it faster and easier to deploy. Users can leverage pre-built industry-specific widgets and solutions, along with a user-friendly no-code development studio for effortless customization.

OpenDialog seamlessly adopts new AI models into existing applications, future-proofing your investment and keeping you ahead of your competitors.

Better customer experience

OpenDialog enhances customer experiences through its unique context-first AI model, enabling elevated levels of personalization within fluid, natural conversations.

With 24/7 availability and multilingual capability, OpenDialog ensures accessibility through voice, text, messaging, and mobile apps, catering to diverse user preferences and needs.

Detailed data insights

OpenDialog provides detailed insights that leverage a wide range of data points in every interaction. Users can create custom attributes, enriching auditable and explainable data for thorough analysis.

With a focus on continuous improvement, the platform provides robust analytics and dashboards, allowing users to measure and monitor trends effectively.

Front office process automation through GenAI-powered assistants

Out-of-the-box solutions for regulated industries

Healthcare Automation Solutions

- Appointment scheduling & management

- Patient onboarding automation

- Health & wellness support

- Outpatient support

- Billing & insurance query automation

- and more

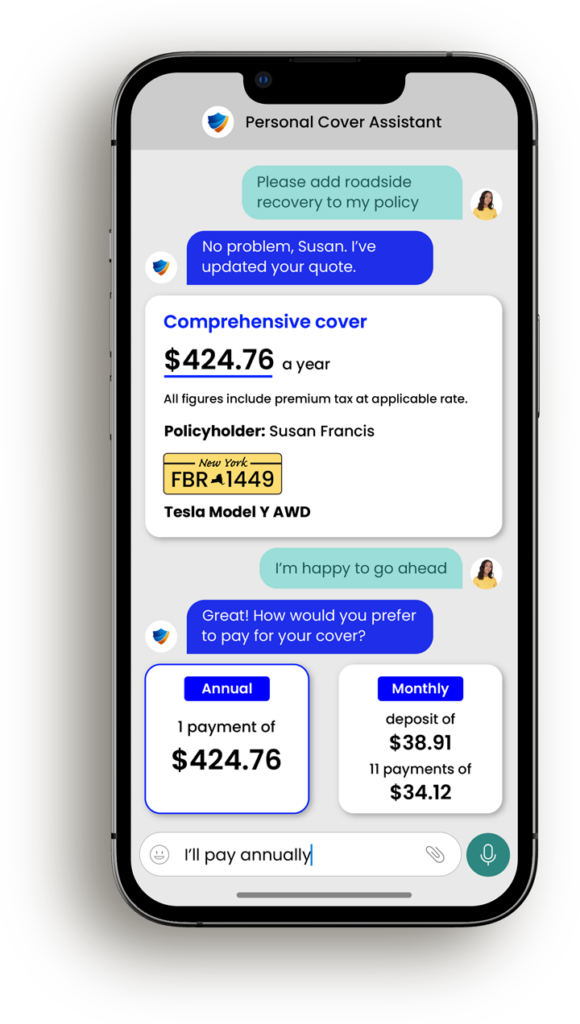

Insurance Automation Solutions

- Contact center automation

- Quote-to-bind journey

- Renewals

- Claims automation

- Mid-term adjustments

- and more

Customer success stories

82% automation rate

25% sales uplift

“OpenDialog’s team is top drawer, it’s been an absolute pleasure. Our process is now a lot quicker and we have more dialogue with customers, leading to associated savings and more opportunities to capture sales.”

Anthony Fletcher

Chief Operating Officer

VRG, Part of Davies Group

Start Your Generative AI Powered Transformation Journey Today